Smart regulation: The gateway to frontier innovation

Executive Summary

When it comes to the frontier technologies that shape an economy’s development trajectory, information and communications technologies (ICT) regulators have an unenviable task across three challenges:

- Keeping up with the breakneck pace of change that creates new use cases each day.

- Protecting promising players while leveling the playing field for newcomers.

- Supporting new technologies while curbing lax policies that can lead to ethical breaches.

With frontier technologies poised to grow exponentially in the next five years (US $350 billion to $3.2 trillion by 2025),[1] the payoff has never been higher. And neither have the risks. Ill-conceived regulatory approaches can turn the frontier into a wasteland: too harsh, and you stifle innovation; too soft, and you risk moral hazard that disqualifies participation in the global game. Surprisingly enough, the key to successfully gold-mining frontier technologies lies in a thoughtful, proactive approach to regulation; that is, an open and overarching regulatory model to address these new technologies and competitive dynamics, built upon a strong understanding of specific use cases.

1

Frontier technologies show strong promise

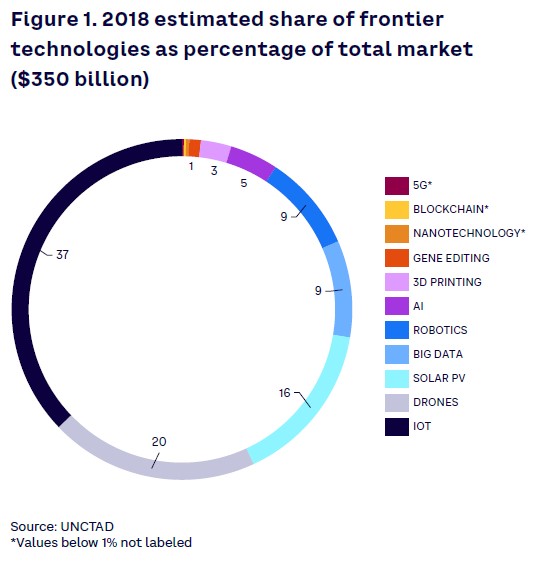

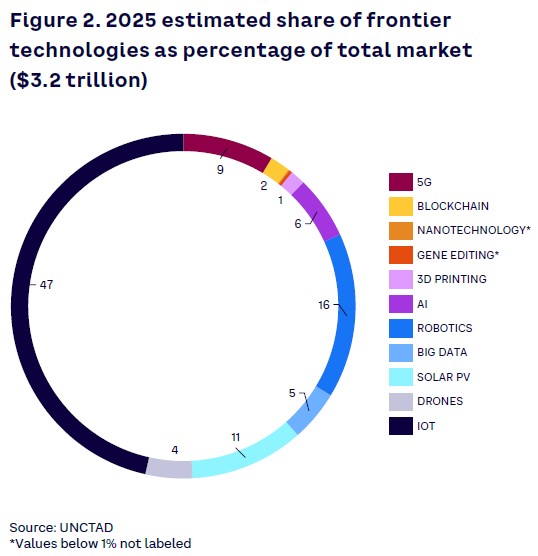

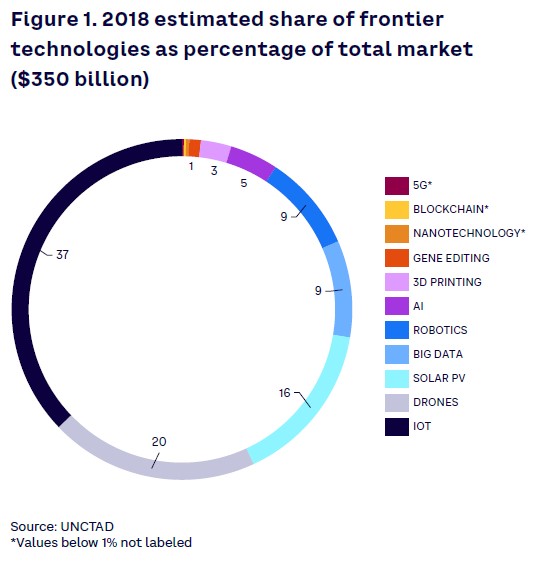

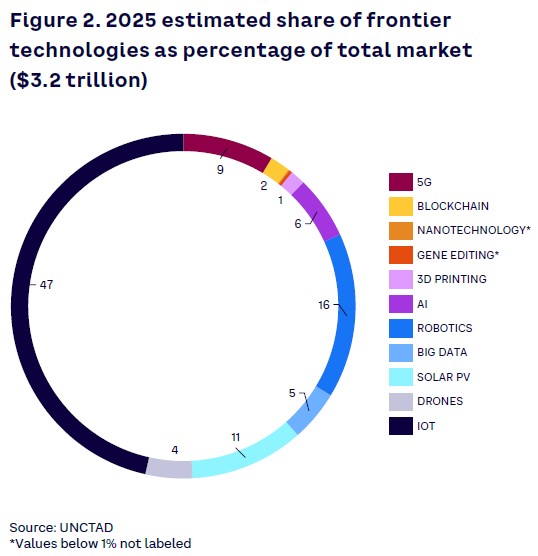

Leveraging ubiquitous gigabit connectivity and widespread digitalization, frontier technologies represent key building blocks for current and near-future innovation. As defined by UNCTAD, the 11 frontier technologies are artificial intelligence (AI), Internet of Things (IoT), big data, blockchain, 3D printing, robotics, drones, gene editing, 5G, nanotechnology, and solar photovoltaic (PV). As highlighted in Figures 1 and 2, UNCTAD reports that the combined market size of frontier technologies is expected to grow close to tenfold from 2018 to 2025, going from $350 billion to $3.2 trillion. These technologies must be developed and combined to unlock new possibilities across various industry sectors (e.g., robotics in healthcare, blockchain in finance, AI in transportation).
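As a quick check on the figures above, the growth multiple and the implied compound annual growth rate can be worked out directly. The annual rate is our own derivation, assuming smooth compounding over the seven years from 2018 to 2025; it is not a figure reported by UNCTAD, and the function names are illustrative.

```python
def growth_multiple(start: float, end: float) -> float:
    """How many times larger the end value is than the start value."""
    return end / start


def compound_annual_growth_rate(start: float, end: float, years: int) -> float:
    """Constant yearly growth rate that turns `start` into `end` over `years`."""
    return (end / start) ** (1 / years) - 1


# Market sizes quoted in the text (US dollars).
start_usd = 350e9   # 2018
end_usd = 3.2e12    # 2025
years = 2025 - 2018

multiple = growth_multiple(start_usd, end_usd)
annual_rate = compound_annual_growth_rate(start_usd, end_usd, years)

print(f"Growth multiple: {multiple:.1f}x")
print(f"Implied annual growth: {annual_rate:.1%}")
```

The multiple comes out at roughly nine, which matches the "close to tenfold" description, and the implied annual rate is in the high thirties in percentage terms.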

While frontier technologies seem to open vast opportunities for countries that take a lead in their development, there are also potential risks and downsides that countries need to address. Overall, governments must be proactive in understanding these technologies and finding the right balance between promotion and regulation. Only a considered approach will allow for safe and privacy-respecting innovation while ensuring continued harmonization with other global technology leaders.

Historically, governments and regulators that have embraced a more proactive approach to understanding emerging technologies and created a framework that promotes the safe development of such technologies have allowed their countries to close the technological gap or to increase their lead.

As an example, in 1950s Japan, proactive policy and regulation from the Ministry of International Trade and Industry (MITI) enabled the country to move to the forefront in the emerging analog electronics industry by leveraging technology transfers from US companies. A few decades later, the US managed to retake and consolidate its lead in developing digital technologies, thanks to ambitious policies pushed by extensive regulatory and financial support from multiple government entities, including the Defense Advanced Research Projects Agency (DARPA). In contrast, governments unable to adapt their regulatory approach to new technologies often ended up impeding innovation, whether in regard to ICT or for more traditional industries.

Drone regulations in the US illustrate a progressive shift in recent years from an extension of aircraft regulations to purpose-built rules. In 2016, the Federal Aviation Administration (FAA) defined various operational limitations and rules for drone usage while also granting exemptions for companies to operate drones for various commercial use cases. This shift helped overcome a regulatory framework that was inadequate and likely to impede the development of drone-enabled services. Consequently, the new regulation enabled the technology and the associated use cases to grow while ensuring advancements respected the safety and security of all.

In contrast, countries that have implemented effective bans on drone usage with no plans to build a more progressive regulatory framework will likely miss out on the ever-changing development of a technology that can have significant positive impact on the economy as well as on consumer welfare.

All frontier technologies are impacted by the regulatory framework that they must follow. However, technologies with the largest estimated market size as well as those that can serve as enablers and accelerators to other technologies are likely to be impacted the most. Thus, there’s a crucial need for a stronger understanding and adaptability from regulators. Technologies like AI, big data, and IoT can be developed for standalone use cases while supporting the growth of other technologies, such as drones, robotics, or 3D printing, and should therefore be subject to a more holistic approach from regulators that considers the key role these technologies can play in unlocking the true potential of other technologies.

2

Challenges of adopting emerging technologies

While frontier technologies are key enablers for innovation and technological progress, the ability to develop and leverage such technologies varies greatly across countries. The key readiness differentiators include ICT deployment, overall skills and capabilities, R&D activities, industry activity, and access to finance.

These disparities in technological readiness stem from innovation, which has exponentially amplified inequalities between countries since the Industrial Revolution. Innovation builds upon previously developed technology, progressively strengthening the linkages and dependencies between new technologies. This cumulative effect also shows up in intergenerational inequalities: newer generations born in areas with low innovation potential find it harder to build on newly developed technology to innovate further, which makes it much harder for people already subject to inequalities (from previous frontier technologies and their use cases) to close the gap.

While inequalities within countries have decreased over the last century, inequalities between countries represented 85% of global inequality at the beginning of the 21st century (up from 28% in 1820), according to UNCTAD. This evolution is caused mainly by the exponentially growing gap in GDP per capita and disposable income between countries at the forefront of innovation and those lagging behind in terms of the emergence of new technologies. As emerging technologies play a more prominent role in the global economy, we expect this inequality gap to continue to widen.

As a result, this cumulative aspect of new technology development raises various challenges that countries must address to fully benefit from the opportunities while mitigating their potential downsides.

Regulatory challenges

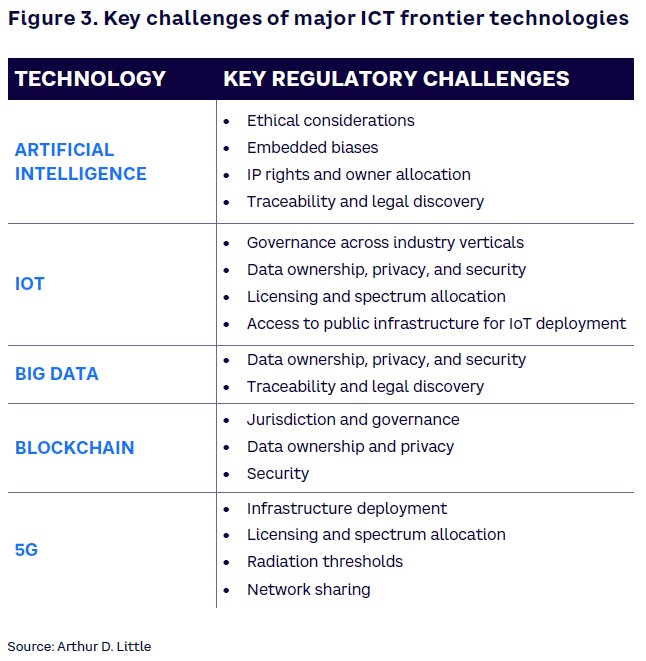

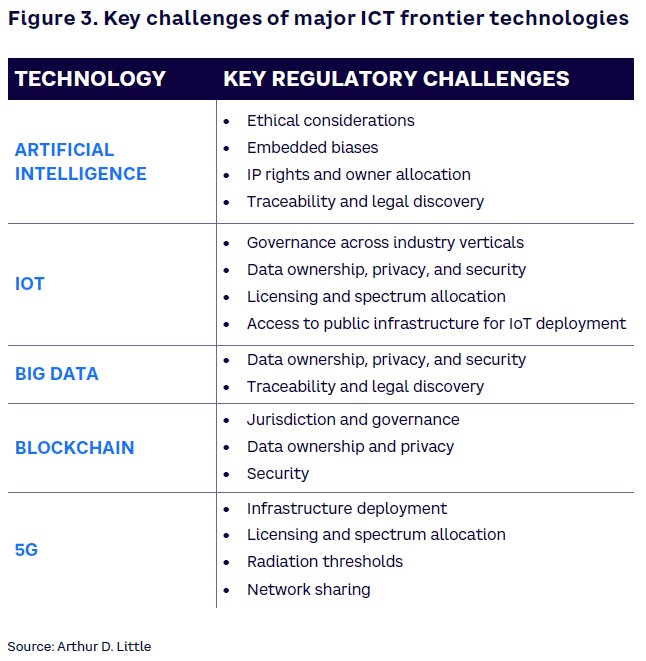

The first set of challenges technology adoption raises relates to regulation. With the boom of digitalization and connectivity, concerns about potential privacy, security, and ethics breaches from technology companies and products are becoming more and more worrisome for individuals and governments. Designing and implementing efficient technology regulation is particularly complex because it requires constant adaptation to newly developed use cases and applications. Emerging technology also enables new disruptive business models, often leading to challenges with accountability, ethics, and monitoring. Key regulatory challenges for the five major ICT frontier technologies are illustrated in Figure 3.

Some of these challenges are already known and have been addressed to some extent by regulators. For example, the EU’s General Data Protection Regulation (GDPR), adopted in 2016 and applicable since May 2018, was one of the first significant actions taken at the EU level to better protect privacy and to ensure that technology companies make more ethical use of personal data.

Let’s look more closely at AI, a current key focus of the debate over whether and how to regulate. This technology is rapidly transforming the way individuals live, work, and spend their leisure time; the way businesses serve customers and create shareholder wealth; and the way governments deliver public services. Additionally, AI can play a significant role in maintaining the competitive strength of a leading nation on the global stage while offering an opportunity for challengers to get ahead. Although AI is developing quickly and the benefits are clear, governments are unsure about how to manage its challenges and risks.

Moreover, even though the ethical use of AI is a well-established global principle, there are no clear ways to penalize nonconformance. Legal compliance regarding AI and the data that fuels it is another crucial challenge, especially because data regulations differ from country to country. Protecting IP rights and appropriately allocating ownership and use rights in the components of AI can be difficult because traditional IP approaches are based on human creation. So far, only select leading countries (e.g., the US, Singapore) have published formal positions or views on how to address AI regulation.

Unlike traditional software or technology licensing, AI has several unique components that must be addressed differently, including AI solutions, training data, production data, AI output, and AI evolutions. For each AI component, the following considerations take on high importance:

- Who is providing the component?

- Who will use the component?

- How will the component be used?

- Who owns the component?

The EU’s approach toward AI is guided by a European strategy and a high-level expert group. The European Commission has published ethics guidelines for trustworthy AI [2] and has followed up with policy and investment recommendations.[3] The commission differentiates between “high-risk” and “non-high-risk” AI applications, with only the former in the scope of a future EU regulatory framework. For high-risk AI applications, the commission considers a set of key requirements covering training data conditions, record-keeping, informational duties, human oversight, and additional requirements specific to particular AI applications. The commission has put large fines in place for companies that violate the law.

Many challenges associated with such emerging technologies are yet to be discovered and understood. Part of the innovation is self-evolutionary and will leverage AI to learn and improve over time. However, AI is not without flaws, and initial biases in developing the underlying models and algorithms can have major repercussions. An illustration of such a threat is the racial or gender bias that has been embedded, consciously or unconsciously, in AI training. Such bias has resulted in higher chances of discrimination for certain populations that, for example, were underrepresented in the training data. The longer an AI algorithm runs, the harder it becomes to identify causal links and potential flaws in its approach.

Optimal emerging technology regulation must not only react to innovation in the sector but must also provide for potential upcoming ethical threats. Keeping pace with cumulative machine learning progress and settling multiple moral dilemmas will be at the core of regulators’ work. Both demand a deep and complete understanding of how various emerging technologies work and of where to draw a clear boundary between what is and is not ethically acceptable.

Whenever a choice must be made between courses of action with different consequences, such as determining how an autonomous vehicle should behave when a crash is imminent, regulators will have to tackle these questions so that future technology is built on foundations that already align with key ethical concerns.

Socioeconomic challenges

The second set of challenges and requirements relates to the socioeconomic aspects of emerging technology. Technology innovation can have significant social benefits for those who can access it. This also means that unequal access to emerging technology will directly impact social equity, as it might exclude certain groups of individuals from the benefits the technology provides.

Companies can also take advantage of these differences in access, as some competitive spaces can be more easily captured and maintained if a particular player has better access to an emerging technology, leading to a dysfunctional market and risks of monopoly, duopoly, or oligopoly. Thus, while innovation should secure enough market advantage to encourage R&D, technology regulators must also ensure that it does not create regulatory asymmetries among players or give leading companies a long-term advantage to which new entrants cannot adequately respond.

3

Regulation approach on a case-by-case basis

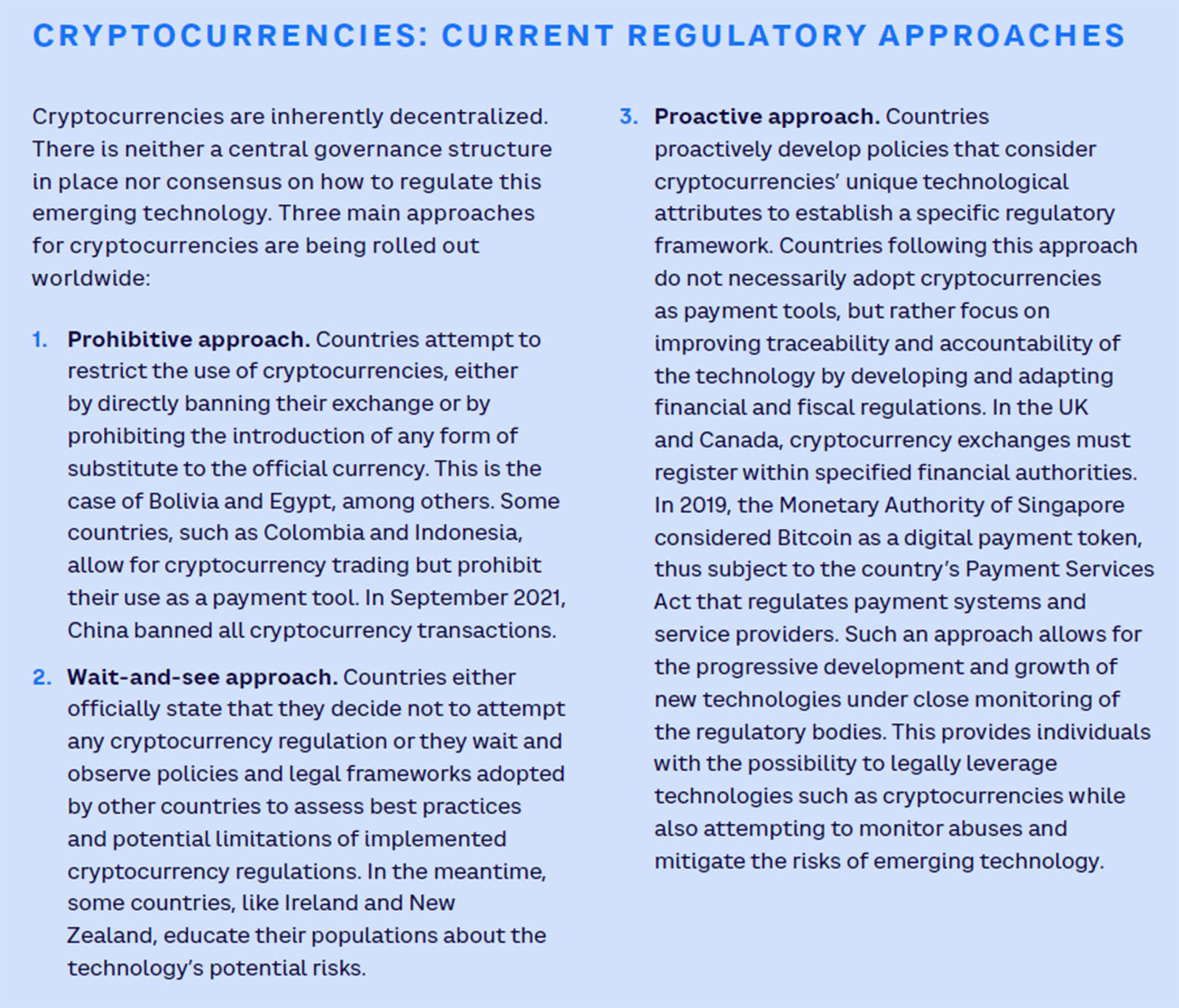

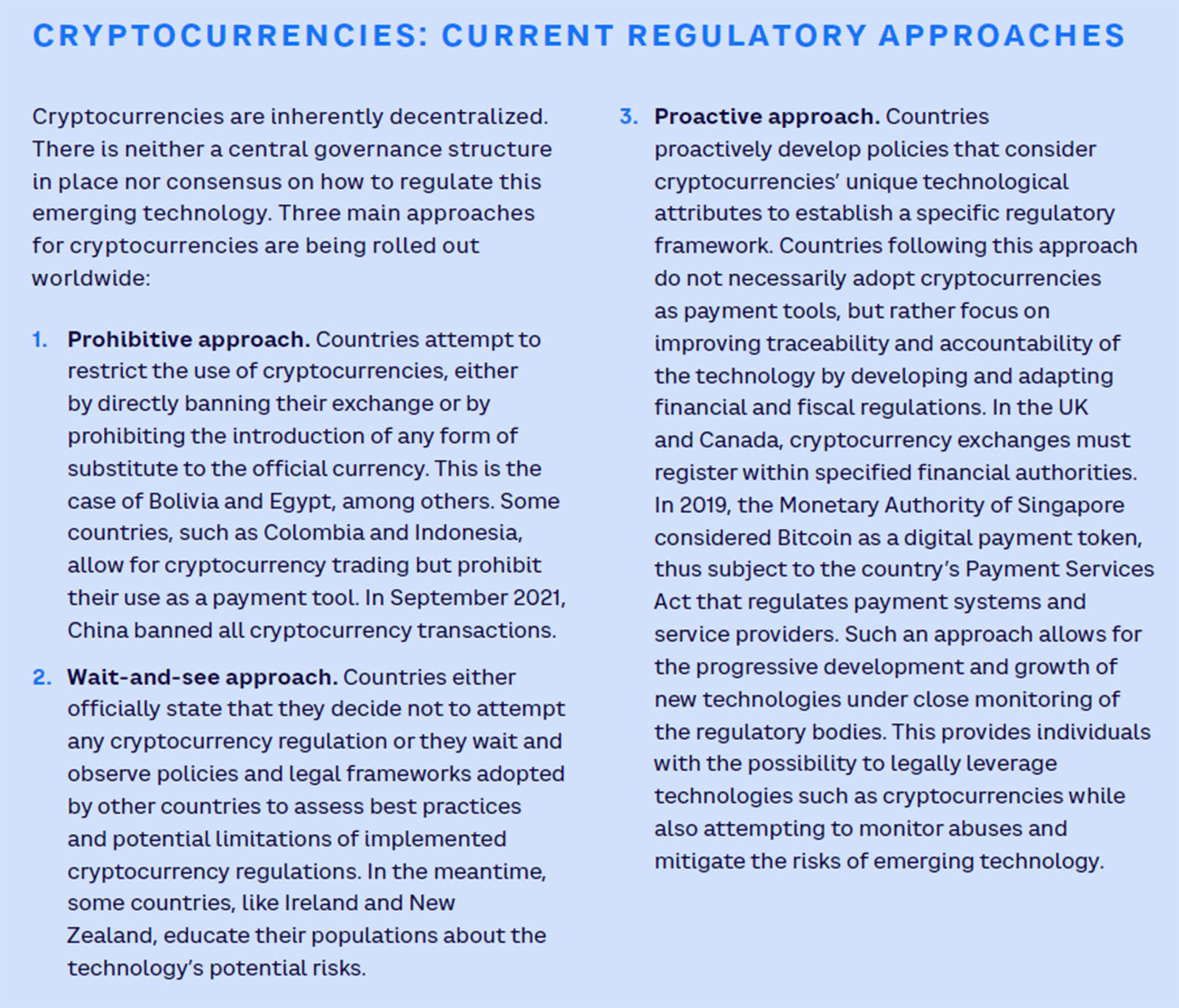

Different approaches exist across the spectrum of regulatory policies that ICT regulators have introduced (e.g., see “Cryptocurrencies: Current regulatory approaches”), including the following three:

- Prohibitive approach (compliance-based)

- Wait-and-see approach (event-based)

- Proactive approach (risk-based)

As new technology is geared toward decentralization, providers and even users can expect emerging technologies to be largely self-regulated. However, as detailed above, lack of intervention from a regulatory entity can lead to multiple pitfalls that would be detrimental not only for users but also for the economy as a whole. In light of this, we believe that the most efficient approach to regulate emerging technologies is the proactive (risk-based) option.

Risk-based regulation identifies and assesses the risks associated with specific practices or technology use cases and takes them into account when drafting and enforcing regulations. The aim is to prepare for and protect against technology threats efficiently without impeding the emergence of new technology. The key risks that regulators must assess are the impact on individual and public safety, social welfare, the wider business environment, and existing regulation, along with the capacity to minimize potential threats of emerging technology use cases.
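One way to picture such an assessment is as a simple scoring exercise per use case. The sketch below is purely illustrative: the five dimensions come from the paragraph above, but the 0 to 5 scale, the unweighted average, the thresholds, and the treatment labels are all assumptions made for the example, not part of any regulator's actual methodology.

```python
from dataclasses import dataclass

# Dimensions taken from the text; names and the 0-5 scale are assumptions.
DIMENSIONS = (
    "public_safety",
    "social_welfare",
    "business_environment",
    "existing_regulation",
    "mitigation_gap",  # how hard the threats are to minimize
)


@dataclass
class UseCaseAssessment:
    name: str
    scores: dict  # dimension -> score from 0 (negligible) to 5 (severe)

    def overall(self) -> float:
        """Unweighted average across all dimensions (a simplifying assumption)."""
        missing = [d for d in DIMENSIONS if d not in self.scores]
        if missing:
            raise ValueError(f"missing scores for: {missing}")
        return sum(self.scores[d] for d in DIMENSIONS) / len(DIMENSIONS)


def suggested_treatment(assessment: UseCaseAssessment,
                        high: float = 3.5, low: float = 1.5) -> str:
    """Map an overall score to a hypothetical regulatory treatment."""
    score = assessment.overall()
    if score >= high:
        return "dedicated rules with close supervision"
    if score <= low:
        return "light-touch monitoring"
    return "proportionate rules, reviewed iteratively"


# Hypothetical scores for a hypothetical use case.
drone_delivery = UseCaseAssessment(
    name="commercial drone delivery",
    scores={
        "public_safety": 4,
        "social_welfare": 2,
        "business_environment": 3,
        "existing_regulation": 4,
        "mitigation_gap": 3,
    },
)
print(drone_delivery.name, "->", suggested_treatment(drone_delivery))
```

In practice, a regulator would likely weight the dimensions differently and revisit the scores as a use case matures, which is where the iterative element of the approach comes in.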

Interest in risk-based regulation often grows from a larger commitment to incorporating rigorous analysis into regulatory decisions. Regulators around the world now use regulatory impact assessment or cost-benefit analysis to structure decision making and anticipate the consequences of different regulatory options. This risk-based approach to emerging technology regulation is one of the dimensions of an agile regulatory framework that can help governments safely and efficiently unlock new technologies’ potential. This framework’s key foundation is clarity in context, vision, and objectives. This foundation can be secured through three main dimensions:

- An evolutionary and agile approach to regulation. Regulators must acknowledge the fast-paced evolution of emerging technologies, as well as the interconnection of various technologies, that allows for the development of new technology use cases whose implications can only be fully understood once deployed. This requires a shift from “regulate and forget” to a more responsive and iterative approach.

- Leading and coordinating policy. Emerging technology use cases span all industries, and technology regulators must embrace a coordinating role between the relevant government entities and private stakeholders to fully understand and monitor the impact of new technology across sectors and effectively regulate it.

- Committed implementation. Regulators should not only frame regulations but should also take an active role in implementing technologies within the country.

Depending on the risk assessment performed, regulators can then leverage all relevant tools to develop an optimal regulatory framework for any emerging technology use case. As an example, the risk of bias in AI leading to greater discrimination can come from either the data set used or the architecture of the developed algorithm. Enforcing the independent auditing of selected data, mandating publication of test results, or implementing a framework for data management can be efficient regulatory tools to address and minimize the risk of discrimination without hindering the development of AI technology.
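To make the idea of a data audit concrete, here is a minimal sketch of one check an independent auditor might run: comparing each group's share of a training data set with a reference share and flagging groups that fall short. The function name, the record layout, the reference shares, and the five-percentage-point tolerance are all hypothetical; real audits would cover far more than representation.

```python
from collections import Counter


def representation_gaps(records, group_key, reference_shares, tolerance=0.05):
    """Return groups whose share of `records` falls short of the reference
    share by more than `tolerance` (all parameters are illustrative)."""
    counts = Counter(record[group_key] for record in records)
    total = sum(counts.values())
    gaps = {}
    for group, expected in reference_shares.items():
        observed = counts.get(group, 0) / total if total else 0.0
        shortfall = expected - observed
        if shortfall > tolerance:
            gaps[group] = round(shortfall, 3)
    return gaps


# Hypothetical training set: group B makes up 20% of records
# against an assumed reference share of 50%.
sample = [{"group": "A"}] * 80 + [{"group": "B"}] * 20
flagged = representation_gaps(sample, "group", {"A": 0.5, "B": 0.5})
print(flagged)
```

A check like this only addresses bias that originates in the data set; bias arising from the algorithm's architecture would need separate testing, such as the published test results mentioned above.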

Where risks cannot be quantified, an evolutionary approach using sandbox-based regulations is another option. Sandboxes create a space where innovation actors can develop new technology use cases under a regulator’s supervision, allowing for safe experimentation as well as knowledge transfers between private companies and regulators. In the early stages of digital banking development, for example, multiple financial regulators followed a sandbox approach to better understand the potential risks of such technology and address them up front instead of risking a large-scale threat in the financial markets.

4

Paradigm shift for regulators

Adopting a framework such as the one described above is a key step in moving from traditional regulators to ambidextrous regulators: those that learn from past and current contexts to understand technology advancement and its potential risks more fully, while also looking ahead to better prepare for emerging technologies.

This requires a paradigm shift in the regulator’s perspective in order to embrace a more proactive regulatory role as well as a new technology promotion role. Regulators shifting to a more responsive and iterative approach must prototype and test new approaches through regulatory sandboxes and accelerator development at the national level and via more collaborative regulation on the global level. Regulators must also develop internal capabilities and support deployment of infrastructure to remain up to date in terms of technology foresight.

With increased complexity, regulators must evolve and update their traditional model to consider:

- A wider range of digital services, especially use cases where more than one industry comes into play.

- A larger scale of players, especially those that are large enough to have a significant impact on an entire ecosystem, including the success or failure of other players.

- New regulatory issues emerging from novel technological use cases and business models.

The regulatory model of the future should consider:

- A broader set of legal fundamentals (e.g., content moderation, privacy and data protection, bias/fairness, explainability, norm setting, existential risk).

- A different method that focuses on services rather than infrastructures, avoiding the creation of asymmetries between old and new players and between different industries and ensuring a larger level playing field.

- An “open” model, including a call for inputs from any stakeholder (e.g., consumers, players from other industries).

- Case-by-case regulation, operationalized through a 360-degree assessment and possibly a categorization of use cases, that strikes a fine balance between promoting innovation and avoiding excessive administrative workloads.

Countries like Singapore are among the global leaders developing forward-looking regulatory frameworks for new technologies. While multiple advanced countries are considering more stringent regulatory frameworks for cryptocurrencies, Singapore has accurately assessed the strategic and economic opportunity represented by the underlying blockchain technology. As a result, the city-state developed an ambitious regulatory framework aiming to monitor the risks associated with cryptocurrencies without hindering the technology’s development, allowing Singapore to strengthen its position as a global financial innovation hub.

The UK government’s recently published AI strategy, which outlines its plan to make Britain a global AI superpower, sets out an agenda to build the most “pro-innovation regulatory environment in the world.”[4] The strategy addresses concerns over the novel ways AI can introduce bias into decision making, assesses whether sector-by-sector AI regulation should continue or make way for cross-sector regulation, and pilots an “AI Standards Hub.”

Conclusion

Like all previous major innovative breakthroughs, frontier technologies and their underlying use cases present an opportunity for countries to close the gap or to increase their lead over others. However, countries will only be able to do so if they can successfully shift their regulatory stances toward more open and innovative approaches.

To ensure countries are correctly addressing existing and upcoming regulatory challenges arising from the development of current frontier technologies, these approaches should be built upon a broader set of legal fundamentals, a more collaborative way of organizing, and case-by-case assessments that consider a wider range of digital services, the increasing number of players, and the emerging concerns and risks that have yet to be uncovered. This shift will ensure that both humans and technologies coexist optimally and harmoniously, while constantly striving for the achievement of larger sustainable development goals.

Notes

[1] “Technology and Innovation Report.” United Nations Conference on Trade and Development (UNCTAD), 2021.

[2] High-Level Expert Group on Artificial Intelligence. “Ethics Guidelines for Trustworthy AI.” European Commission, 8 April 2019.

[3] High-Level Expert Group on Artificial Intelligence. “Policy and Investment Recommendations for Trustworthy AI.” European Commission, 26 June 2019.

[4] “National AI Strategy.” Gov.UK, 22 September 2021.

DOWNLOAD THE FULL REPORT

Smart regulation: The gateway to frontier innovation

DATE

Executive Summary

When it comes to the frontier technologies that shape an economy’s development trajectory, information and communications technologies (ICT) regulators have an unenviable task across three challenges:

- Keeping up with the breakneck pace of change that creates new use cases each day.

- Protecting promising players while leveling the playing field for newcomers.

- Supporting new technologies while curbing lax policies that can lead to ethical breaches.

With frontier technologies poised to grow exponentially in the next five years (US $350 billion to $3.2 trillion by 2025),[1] the payoff has never been higher. And neither have the risks. Ill-conceived regulatory approaches can turn the frontier into a wasteland: too harsh, and you stifle innovation; too soft, and you risk moral hazard that disqualifies participation in the global game. Surprisingly enough, the key to successfully gold-mining frontier technologies lies in a thoughtful, proactive approach to regulation; that is, an open and overarching regulatory model to address these new technologies and competitive dynamics, built upon a strong understanding of specific use cases.

1

Frontier technologies show strong promise

Leveraging ubiquitous gigabit connectivity and widespread digitalization, frontier technologies represent key building blocks for current and near-future innovation. As defined by UNCTAD, the 11 frontier technologies include artificial intelligence (AI), Internet of Things (IoT), big data, blockchain, 3D printing, robotics, drones, gene editing, 5G, nanotechnology, and solar photovoltaic (PV). As highlighted in Figures 1 and 2 , UNCTAD reports that the combined market size of frontier technologies is expected to grow close to tenfold from 2018 to 2025, going from $350 billion to $3.2 trillion. These technologies must be developed and combined to unlock new possibilities across various industry sectors (e.g., robotics in healthcare, blockchain in finance, AI in transportation).

While frontier technologies seem to open vast opportunities for countries that take a lead in their development, there are also potential risks and downsides that countries need to address. Overall, governments must be proactive in understanding these technologies and finding the right balance between promotion and regulation. Only a considered approach will allow for safe and privacy-respecting innovation while ensuring continued harmonization with other global technology leaders.

Historically, governments and regulators that have embraced a more proactive approach to understanding emerging technologies and created a framework that promotes the safe development of such technologies have allowed their countries to close the technological gap or to increase their lead.

As an example, in 1950s Japan, proactive policy and regulation from the Ministry of International Trade and Industry (MITI) enabled the country to move to the forefront in the emerging analog electronics industry by leveraging technology transfers from US companies. A few decades later, the US managed to retake and consolidate its lead in developing digital technologies, thanks to ambitious policies pushed by extensive regulatory and financial support from multiple government entities, including the Defense Advanced Research Projects Agency (DARPA). In contrast, governments unable to adapt their regulatory approach to new technologies often ended up impeding innovation, whether in regard to ICT or for more traditional industries.

Drone regulations in the US illustrate a progressive shift over the past years from an extension of aircraft regulations to specifically designed rules. In 2016, the Federal Aviation Administration (FAA) defined various operational limitations and rules for drone usage while also granting exemptions for companies to operate drones for various commercial use cases. This shift helped overcome a regulatory framework that was inadequate and likely to impede the development of drone-enabled services. Consequently, the new regulation enabled the technology and the associated use cases to grow while ensuring advancements respected the safety and security of all.

In contrast, countries that have implemented effective bans on drone usage with no plans to build a more progressive regulatory framework will likely miss out on the ever-changing development of a technology that can have significant positive impact on the economy as well as on consumer welfare.

All frontier technologies are impacted by the regulatory framework that they must follow. However, technologies with the largest estimated market size as well as those that can serve as enablers and accelerators to other technologies are likely to be impacted the most. Thus, there’s a crucial need for a stronger understanding and adaptability from regulators. Technologies like AI, big data, and IoT can be developed for standalone use cases while supporting the growth of other technologies, such as drones, robotics, or 3D printing, and should therefore be subject to a more holistic approach from regulators that considers the key role these technologies can play in unlocking the true potential of other technologies.

2

Challenges of adopting emerging technologies

While frontier technologies are key enablers for innovation and technological progress, the ability to develop and leverage such technologies varies greatly across countries. The key readiness differentiators include ICT deployment, overall skills and capabilities, R&D activities, industry activity, and access to finance.

These disparities in technological readiness emerge from innovation having exponentially amplified inequalities between countries since the Industrial Revolution. Innovation builds upon previously developed technology to unlock the potential that progressively enhances the linkages and dependencies between new technologies. At the same time, this cumulative effect can also be seen in the development of intergenerational inequalities, as newer generations born in areas with low innovation potential find it more difficult to build on newly developed technology to innovate further, making it much more difficult for people already subject to inequalities (from previous frontier technologies and their use cases) to close the gap.

While inequalities within countries have decreased over the last century, inequalities between countries represented 85% of global inequality at the beginning of the 21st century (up from 28% in 1820), according to UNCTAD. This evolution is caused mainly by the exponentially growing gap in GDP per capita and disposable income between countries at the forefront of innovation and those lagging behind in terms of the emergence of new technologies. As emerging technologies play a more prominent role in the global economy, we expect this inequality gap to continue to widen.

As a result, this cumulative aspect of new technology development raises various challenges that countries must address to fully benefit from the opportunities while mitigating their potential downsides.

Regulatory challenges

The first set of challenges technology adoption raises relates to regulation. With the boom of digitalization and connectivity, concerns about potential privacy, security, and ethics breaches from technology companies and products are becoming more and more worrisome for individuals and governments. Designing and implementing efficient technology regulation is particularly complex because it requires constant adaptation to newly developed use cases and applications. Emerging technology also enables new disruptive business models, often leading to challenges with accountability, ethics, and monitoring. Key regulatory challenges for the five major ICT frontier technologies are illustrated in Figure 3.

Some of these challenges are already known and have been addressed to some extent by regulators. In 2016, for example, the European General Data Protection Regulation (GDPR) was one of the first significant actions taken by multiple EU member states in an attempt to better protect privacy and to guarantee technology companies made more ethical use of personal data.

Let’s examine AI, a current key focus for regulation/nonregulation, a bit deeper. This particular technology rapidly transforms the way individuals live, work, and recreate; businesses serve customers and create shareholder wealth; and governments deliver public services. Additionally, AI can play a significant role in maintaining the competitive strength of a leading nation on the global stage while offering an opportunity for challengers to get ahead. Although AI is developing quickly and the benefits are clear, governments are unsure about how to manage its challenges and risks.

Moreover, even though the ethical use of AI is a well-established global principle, there are no clear ways to penalize nonconformance. Legal compliance regarding AI and the data that fuels it is another crucial challenge, especially with different countries having different data regulations. Protecting IP rights and appropriately allocating ownership and use rights in the components of AI can be difficult because traditional IP approaches are based on human creation. Only select leading countries (e.g., the US, Singapore) so far have published formal stands or views on how to address AI regulation.

Unlike traditional software or technology licensing, AI has several unique components that must be addressed differently, including AI solutions, training data, production data, AI output, and AI evolutions. For each AI component, the following considerations take on high importance:

- Who is providing the component?

- Who will use the component?

- How will the component be used?

- Who owns the component?

The EU’s approach toward AI is guided by a European strategy and a high-level expert group. The European Commission has published ethics guidelines for trustworthy AI [2] and has followed up with policy and investment recommendations.[3] The commission differentiates between “high-risk” and “non-high-risk” AI applications, with only the former in the scope of a future EU regulatory framework. For high-risk AI applications, the commission considers a set of key requirements covering training data conditions, record-keeping, informational duties, human oversight, and rules specific to particular AI applications. The commission has also proposed large fines for companies that violate these rules.

Many challenges associated with such emerging technologies are yet to be discovered and understood. Part of the innovation is self-evolutionary and will leverage AI to learn and improve over time. However, AI is not without flaws, and initial biases in developing the underlying models and algorithms can have major repercussions. One illustration of this threat is the racial or gender bias that has been embedded, consciously or unconsciously, in AI training. Such bias has resulted in a higher chance of discrimination against certain populations that, for example, were underrepresented in the training data. The longer an AI algorithm runs, the harder it becomes to identify causal links and potential flaws in its approach.

Optimal emerging technology regulation must not only react to innovation in the sector but must also provide for potential upcoming ethical threats. Keeping pace with cumulative machine learning progress and settling multiple moral dilemmas will be at the core of regulators’ work. Both require a deep and complete understanding of how various emerging technologies work and of where to draw a clear boundary between what is and is not ethically acceptable.

Regulators will have to tackle the questions that arise whenever a choice must be made between courses of action with different consequences, such as what behavior an autonomous vehicle should adopt when a crash is imminent. Settling these questions early helps ensure that future technology is developed on building blocks that are already in line with key ethical concerns.

Socioeconomic challenges

The second set of challenges and requirements relates to the socioeconomic aspects of emerging technology. Technology innovation can have significant social benefits for those who can access it. This also means that unequal access to emerging technology will directly impact social equity, as it might exclude certain groups of individuals from the benefits the technology provides.

Companies can also take advantage of these differences in access. Some competitive spaces can be more easily captured and maintained if a particular player has better access to an emerging technology, leading to a dysfunctional market and risks of monopoly, duopoly, or oligopoly. Thus, while innovation should secure enough market advantage to encourage R&D, technology regulators must also ensure that regulation does not create asymmetries among players or give leading companies a long-term advantage that new entrants cannot counter.

3

Regulation approach on case-by-case basis

Different approaches exist across the spectrum of regulatory policies that ICT regulators have introduced (e.g., see “Cryptocurrencies: Current regulatory approaches”), including the following three:

- Prohibitive approach (compliance-based)

- Wait-and-see approach (event-based)

- Proactive approach (risk-based)

As new technology is geared toward decentralization, providers and even users may expect emerging technologies to be largely self-regulated. However, as detailed above, lack of intervention from a regulatory entity can lead to multiple pitfalls that would be detrimental not only for users but also for the economy as a whole. In light of this, we believe that the most effective approach to regulating emerging technologies is the proactive (risk-based) option.

Risk-based regulation identifies and assesses the risks associated with specific practices or technology use cases. It takes those risks into account when drafting and enforcing regulations, so that regulators can prepare for and protect against technology threats without impeding the emergence of new technology. The key factors that regulators must assess are the impact on individual and public safety, social welfare, the wider business environment, and existing regulation, as well as the capacity to minimize potential threats of emerging technology use cases.
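The assessment described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the 1-to-5 scales, the `UseCaseAssessment` class, the scoring rule, and the three response labels are hypothetical and are not drawn from any regulator’s actual methodology.

```python
from dataclasses import dataclass


@dataclass
class UseCaseAssessment:
    """Hypothetical scores for one technology use case.

    Impact scores run from 1 (low) to 5 (high); mitigation_capacity
    runs from 1 (weak) to 5 (strong). All scales are assumptions.
    """
    name: str
    safety: int                # impact on individual and public safety
    social_welfare: int        # impact on social welfare
    business_environment: int  # impact on the wider business environment
    existing_regulation: int   # friction with existing regulation
    mitigation_capacity: int   # capacity to minimize potential threats

    def residual_risk(self) -> int:
        # Take the worst impact score, then reduce it when the capacity
        # to mitigate threats is strong. Never go below 1.
        worst = max(self.safety, self.social_welfare,
                    self.business_environment, self.existing_regulation)
        return max(1, worst - (self.mitigation_capacity - 1) // 2)


def regulatory_response(assessment: UseCaseAssessment) -> str:
    """Map residual risk to an illustrative (hypothetical) response."""
    risk = assessment.residual_risk()
    if risk >= 4:
        return "targeted rules and audits"
    if risk >= 3:
        return "supervised experimentation"
    return "monitoring"


# Invented example use cases and scores, for illustration only.
drone = UseCaseAssessment("drone delivery", 5, 3, 2, 3, 2)
scoring = UseCaseAssessment("AI credit scoring", 3, 4, 3, 2, 3)
chatbot = UseCaseAssessment("retail chatbot", 2, 2, 3, 1, 4)

for case in (drone, scoring, chatbot):
    print(case.name, case.residual_risk(), regulatory_response(case))
```

Using the worst single score rather than an average reflects one possible design choice: a severe safety risk should not be diluted by low scores elsewhere. A real assessment would rest on evidence and qualitative judgment rather than a single number.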

Interest in risk-based regulation often grows from a larger commitment to incorporating rigorous analysis into regulatory decisions. Regulators around the world now use regulatory impact assessment or cost-benefit analysis to structure decision making and anticipate the consequences of different regulatory options. This risk-based approach to emerging technology regulation is one of the dimensions of an agile regulatory framework that can help governments safely and efficiently unlock new technologies’ potential. This framework’s key foundation is clarity in context, vision, and objectives. This foundation can be secured through three main dimensions:

- An evolutionary and agile approach to regulation. Regulators must acknowledge the fast-paced evolution of emerging technologies, as well as the interconnection of various technologies, that allows for the development of new technology use cases whose implications can only be fully understood once deployed. This requires a shift from “regulate and forget” to a more responsive and iterative approach.

- Leading and coordinating policy. Emerging technology use cases span all industries, and technology regulators must embrace a coordinating role between the relevant government entities and private stakeholders to fully understand and monitor the impact of new technology across sectors and effectively regulate it.

- Committed implementation. Regulators should not only frame regulations but should also take an active role in implementing technologies within the country.

Depending on the risk assessment performed, regulators can then leverage all relevant tools to develop an optimal regulatory framework for any emerging technology use case. As an example, biases in AI that lead to discrimination can come from either the data set used or the architecture of the developed algorithm. Enforcing the independent auditing of selected data, mandating publication of test results, or implementing a framework for data management can be effective regulatory tools to address and minimize the risk of discrimination without hindering the development of AI technology.
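To make the idea of a data audit more concrete, the following minimal Python sketch shows two checks an independent auditor might run: how well each group is represented in a data set, and how much the rate of favorable outcomes differs between groups. The field names (`group`, `approved`), the toy records, and the helper functions are all hypothetical, and real audits involve far more than these two measures.

```python
from collections import Counter


def representation_shares(records, group_key):
    """Return each group's share of the data set (between 0 and 1)."""
    counts = Counter(r[group_key] for r in records)
    total = sum(counts.values())
    return {group: n / total for group, n in counts.items()}


def selection_rates(records, group_key, outcome_key):
    """Return the fraction of favorable outcomes for each group."""
    totals = Counter()
    favorable = Counter()
    for r in records:
        totals[r[group_key]] += 1
        if r[outcome_key]:
            favorable[r[group_key]] += 1
    return {group: favorable[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Lowest group selection rate divided by the highest."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest else 1.0


# Invented toy data: group B is underrepresented and approved less often.
records = (
    [{"group": "A", "approved": True}] * 6
    + [{"group": "A", "approved": False}] * 2
    + [{"group": "B", "approved": True}] * 1
    + [{"group": "B", "approved": False}] * 1
)

shares = representation_shares(records, "group")
rates = selection_rates(records, "group", "approved")
ratio = disparate_impact_ratio(rates)

print("shares:", shares)
print("selection rates:", rates)
print("disparate impact ratio:", round(ratio, 2))
```

A ratio well below 1 signals that one group receives favorable outcomes much less often than another. Where to set the threshold that triggers further review is a policy choice for the regulator and is left open here.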

Where risks cannot be quantified, an evolutionary approach using sandbox-based regulations is another option. Sandboxes create a space where innovation actors can develop new technology use cases under a regulator’s supervision, allowing for safe experimentation as well as knowledge transfers between private companies and regulators. In the early stages of digital banking development, for example, multiple financial regulators followed a sandbox approach to better understand the potential risks of such technology and address them up front instead of risking a large-scale threat in the financial markets.

4

Paradigm shift for regulators

Adopting a framework such as the one described above is a key step toward the move from traditional regulators to ambidextrous regulators that learn from the past and current contexts to understand technology advancement more fully along with its potential risks, while also looking ahead to better prepare for emerging technologies.

This requires a paradigm shift in the regulator’s perspective in order to embrace a more proactive regulatory role as well as a new technology promotion role. Regulators shifting to a more responsive and iterative approach must prototype and test new approaches through regulatory sandboxes and accelerator development at the national level and via more collaborative regulation on the global level. Regulators must also develop internal capabilities and support deployment of infrastructure to remain up to date in terms of technology foresight.

With increased complexity, regulators must evolve and update their traditional model to consider:

- A wider range of digital services, especially use cases where more than one industry comes into play.

- A larger scale of players, especially those that are large enough to have a significant impact on an entire ecosystem, including the success or failure of other players.

- New regulatory issues emerging from novel technological use cases and business models.

The regulatory model of the future should consider:

- A broader set of legal fundamentals (e.g., content moderation, privacy and data protection, bias/fairness, explainability, norm setting, existential risk).

- A different method that focuses on services rather than infrastructures, avoiding the creation of asymmetries between old and new players and between different industries and ensuring a more level playing field.

- An “open” model, including a call for inputs from any stakeholder (e.g., consumers, players from other industries).

- Case-by-case regulation, operationalized through a 360-degree assessment and possibly a categorization of use cases, that balances the promotion of innovation against the added administrative workload.

Countries such as Singapore are among the global leaders developing forward-looking regulatory frameworks for new technologies. While multiple advanced countries are considering more stringent regulatory frameworks for cryptocurrencies, Singapore has recognized the strategic and economic opportunity represented by the underlying blockchain technology. As a result, the city-state developed an ambitious regulatory framework aiming to monitor the risks associated with cryptocurrencies without hindering the technology’s development, allowing Singapore to strengthen its position as a global financial innovation hub.

The UK government’s National AI Strategy, published in September 2021, outlines a plan to make Britain a global AI superpower and sets out an agenda to build the most “pro-innovation regulatory environment in the world.”[4] The strategy addresses concerns over the novel ways AI can introduce bias into decision making, assesses whether sector-by-sector AI regulation should continue or make way for cross-sector regulation, and pilots an “AI Standards Hub.”

Conclusion

Like all previous major innovative breakthroughs, frontier technologies and their underlying use cases present an opportunity for countries to close the gap or to increase their lead over others. However, countries will only be able to do so if they can successfully shift their regulatory stances toward more open and innovative approaches.

To ensure countries correctly address existing and upcoming regulatory challenges arising from the development of current frontier technologies, these approaches should be built upon a broader set of legal fundamentals, a more collaborative way of organizing, and case-by-case assessments that consider a wider range of digital services, the increasing number of players, and the emerging concerns and risks that have yet to be uncovered. This shift will help humans and technologies coexist optimally and harmoniously, while constantly striving for the achievement of larger sustainable development goals.

Notes

[1] “Technology and Innovation Report.” United Nations Conference on Trade and Development (UNCTAD), 2021.

[2] High-Level Expert Group on Artificial Intelligence. “Ethics Guidelines for Trustworthy AI.” European Commission, 8 April 2019.

[3] High-Level Expert Group on Artificial Intelligence. “Policy and Investment Recommendations for Trustworthy AI.” European Commission, 26 June 2019.

[4] “National AI Strategy.” Gov.UK, 22 September 2021.
